Note to my readers: I’ve moved the Playlist section to the bottom of the posts.

Product reviews are in progress, but before I publish any of them, I need to decide how to present my ratings and what criteria to use for the final verdict.

I found myself wondering just what kind of rating system you folks prefer.

A 12-point dog rating, using my son’s Boston Terrier icon?

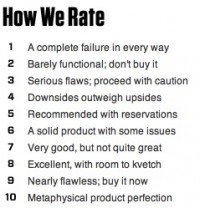

What criteria do you need to see to understand how a rating system works? Do you like an overall product scale of 1 to 5 stars, thumbs, or whatever icons other sites use? (A lovely 5-tree example appears on Jennifer Chiat’s Growing a Green Family blog.) Or do you prefer separate ratings for build quality (or manufacturing), hardware or software interface design, product usefulness, user skill level, or other attributes that escape me at the moment? Or do you prefer Wired’s style, a more complex system that tells you whether a product is worth your dime, as shown below?

Source: Screen shot from Wired’s “Gear of the Year” page.

Personally, I don’t like thumbs up or down, or any two-option system. For example, I often recommend Adobe Photoshop Elements to new photographers who want to edit their shots. Yet I prefer Photoshop or Lightroom, because I am not crazy about the Elements interface. So personally, the product is a nay, but publicly, it’s a yea.

There is also the case where a product is well made and serves its purpose, but doesn’t meet the reviewer’s specific needs and may or may not meet yours. In a 5-point rating system, that product might get only 3.5 points. I don’t particularly like the 2-point or the 5-point systems.

According to articles I’ve read, a rating system is what builds a reviewer’s reputation. If I rate products inconsistently, you won’t trust what I have to say, and that hurts my reputation as a product reviewer. So that’s no good.

What I really need to know is: what rating system do you prefer, and can I implement it? Thank you in advance for any comments you have.

Playlist (i.e., music to read by) – Easy listening

Supertramp. “Even In The Quietest Moments.” Gold – Retrospectacle – The Supertramp Anthology. 2005, A&M Records.

Commodores. “Sail On.” All The Great Hits. 2007, Motown Records.

Los Angeles Philharmonic. “Appalachian Spring – (Ballet for Martha).” Copland: Appalachian Spring. 2009, Deutsche Grammophon GmbH, Hamburg.

(Disclaimer: The music links have my Amazon Associates code embedded in them, so anything you buy after you click results in my getting a small kickback. Thank you!)

A calibrated system like Wired’s works well for telling the reader what the score means, and it’s good that 5 is “average”: like a C grade, which isn’t a fail but generally means basically competent. Rating systems finer than that start to get a bit bogus: take Pitchfork’s 0.0 – 10.0 scale. Is an album really a 7.2 or a 7.3? More helpful is an indication of the intended user: not all products are for everyone, and it’s fair to say “This is a good product, but not for you if___”. I’m not my mother, brother, or colleague, so a product for me may not be for them, and vice versa. Taking that into account in the review is illuminating all ’round.


I like a 10-“unit” spread, but maybe one from -5 to +5, with 0 as a neutral score. This way a negative review can really be negative. It also helps to list a product’s pros and cons. I also like more than one scale, to make the review more meaningful. I am sure vendors would appreciate this type of review, too.


Hi Ilene,

I agree that consistency is key, as is trust in the reviewer for a given demographic (do the writer’s tastes match the reader’s in substantive ways?).

When a reviewer offers both a breakdown of the desirable characteristics found and the challenges experienced, it helps a reader far more than a simple “reviewer’s choice” or snapshot judgment, because we often weight attributes differently when we search for a product. For example, I may be willing to trade off a crappy interface for an unexpectedly wonderful feature, while someone else might consider a steep learning curve a deal breaker. Another helpful factor in a good review is brevity: it seems fewer folks are willing to read more than a paragraph or two these days.

So I generally look for a concise review, written by someone whose taste I trust, that gives me a clearly stated description or list of good and bad attributes. Congrats on the new blog!

My two cents – your mileage may vary.

Sandy


I guess I like seeing a 5-star rating on various criteria, e.g.:

Ease of Install *****

User Interface ***

Features **

Value ***

etc., etc., as appropriate to the product.

A box with such info, along with an overall rating, would be useful to me in a review… Perhaps the target audience (consumer or professional) could be indicated at the top?
